EISM

Computer vision uses neural networks and deep learning to allow computer programs to “see” and analyze data in a semi-automated way. It is no exaggeration to say that computer vision technology is having a dramatic impact on virtually every industry, from retail to agriculture to sports and everything in between. Below is a non-exhaustive list of examples and use cases that EISM staff can help you develop and implement in your organization:

Retail Industry

Customer Tracking

Strategically placed counting devices throughout a retail store can gather data through machine learning processes about where customers spend their time, and for how long. Customer analytics can deepen retailers’ understanding of consumer interaction and help optimize store layouts for selling.

People Counting

Computer vision algorithms are trained on example data to detect people and count them as they are detected. People counting helps stores collect data about store performance and can also be applied to occupancy limits, such as those introduced during COVID-19, where only a limited number of people are allowed in a store at once.

Theft Detection

Using computer vision algorithms that autonomously analyze the scene, retailers can detect suspicious behavior such as loitering or accessing areas that are off-limits.

Waiting Time Analytics

To prevent impatient customers and endless waiting lines, retailers are implementing queue detection technology. Queue detection uses cameras to track and count the number of shoppers in a line. Once a threshold of customers has been reached, the system sounds an alert for clerks to open new checkouts.
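
The threshold rule described above can be sketched in a few lines. This is a minimal illustration, not a production design: the function name, the `min_consecutive` debounce parameter, and the sample counts are all assumptions introduced here, and the per-frame shopper counts are assumed to come from an upstream detector.

```python
def should_open_checkout(queue_counts, threshold, min_consecutive=3):
    """Return True once the queue length has met the threshold for
    `min_consecutive` frames in a row.

    Requiring several consecutive frames is an assumed safeguard against
    single-frame miscounts; it is not something the text above prescribes.
    """
    streak = 0
    for count in queue_counts:
        # Extend the streak while the queue is at or above the threshold,
        # and reset it as soon as the queue drops below.
        streak = streak + 1 if count >= threshold else 0
        if streak >= min_consecutive:
            return True
    return False


# Hypothetical per-frame shopper counts reported by a queue camera.
counts = [2, 3, 5, 6, 6, 7, 4]
print(should_open_checkout(counts, threshold=5))
```

In practice the threshold and the debounce window would be tuned per store and per checkout layout.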

Social Distance

To ensure safety precautions are being followed, companies are using distance detectors. A camera tracks employee or customer movement and uses depth sensors to assess the distance between people. Depending on how close a person is to others, the system draws a red or green circle around that person.
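
As a rough sketch of the red/green decision, the example below labels each person by their distance to everyone else. It assumes that an upstream detector and depth sensor already supply floor positions in metres; the function name, the person ids, and the 2.0 m default are illustrative assumptions, not values taken from any specific product.

```python
from itertools import combinations
from math import dist


def label_people(positions, min_distance_m=2.0):
    """Map each person id to "red" if anyone else is closer than
    `min_distance_m`, otherwise "green".

    `positions` maps a person id to an (x, y) floor position in metres.
    """
    labels = {person_id: "green" for person_id in positions}
    # Compare every pair once; a single close pair marks both people red.
    for (id_a, pos_a), (id_b, pos_b) in combinations(positions.items(), 2):
        if dist(pos_a, pos_b) < min_distance_m:
            labels[id_a] = labels[id_b] = "red"
    return labels


# Hypothetical positions for three tracked people.
people = {"p1": (0.0, 0.0), "p2": (1.0, 0.0), "p3": (5.0, 5.0)}
print(label_people(people))
```

The pairwise comparison is quadratic in the number of people, which is usually acceptable for a single camera view.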

Productivity Analytics

Productivity analytics track the impact of workplace change, how employees spend their time and resources, and how they use various tools. Such data can provide valuable insight into time management, workplace collaboration, and employee productivity.

Quality Management

Quality management systems help an organization meet customers’ requirements by addressing its policies, procedures, instructions, and internal processes to reach an overall customer satisfaction rate.

Skill Training

Another application field of vision systems is optimizing assembly line operations in industrial production. The evaluation of human action can help to construct standardized action models related to different operation steps, as well as to evaluate the performance of trained workers. Automatically assessing the action quality of workers can be beneficial by improving working performance, promoting productive efficiency (LEAN optimization), and, more importantly, discovering dangerous actions before damage occurs.

Health Care

Cancer Detection

Machine learning is used in the medical industry for purposes such as breast and skin cancer detection. Image detection allows scientists to pick out slight differences between cancerous and non-cancerous images, and to classify data from magnetic resonance imaging (MRI) scans and submitted photos as malignant or benign.

Cell Classification

Machine learning has been used to distinguish T-lymphocytes from colon cancer epithelial cells with high accuracy. ML is expected to significantly accelerate the identification of colon cancer, at little to no cost once the model has been created.

Movement Analysis

Signs of neurological and musculoskeletal conditions, such as oncoming strokes and balance and gait problems, can be detected using deep learning models and computer vision even without analysis by a doctor. Pose estimation applications that analyze patient movement help doctors diagnose patients more easily and more accurately.

Mask Detection

Masked Face Recognition is used to detect the use of masks and protective equipment to limit the spread of coronavirus. Computer Vision systems help countries to implement masks as a control strategy to contain the spread of coronavirus disease. Private companies such as Uber have created computer vision features such as face detection to be implemented in their mobile apps to detect whether passengers are wearing masks or not. Programs like this make public transportation safer during the coronavirus pandemic.

Tumor Detection

Brain tumors can be seen in MRI scans and are often detected using deep neural networks. Tumor detection software utilizing deep learning is crucial to the medical industry because it can detect tumors with high accuracy to help doctors make their diagnoses. New methods are constantly being developed to heighten the accuracy of these diagnoses.

Disease Progression Score

Computer vision can be used to identify patients that are critically ill to direct medical attention (critical patient screening). People infected with COVID-19 are found to have more rapid respiration. Deep Learning with depth cameras can be used to identify abnormal respiratory patterns to perform an accurate and unobtrusive yet large-scale screening of people infected with the COVID-19 virus.
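
To make the respiration idea concrete, the sketch below estimates a breathing rate from a chest-depth signal. It deliberately swaps the deep learning approach mentioned above for a simple mean-crossing count, and it assumes a clean, already smoothed signal sampled at a known rate. The function name and the synthetic signal are assumptions made for illustration only.

```python
import math


def breaths_per_minute(chest_depth_m, sample_rate_hz):
    """Estimate respiration rate from a chest-depth time series.

    Counts upward crossings of the signal mean; each breath cycle
    contributes one such crossing. Noisy input would need smoothing first.
    """
    mean = sum(chest_depth_m) / len(chest_depth_m)
    centered = [d - mean for d in chest_depth_m]
    cycles = sum(
        1 for prev, cur in zip(centered, centered[1:]) if prev < 0 <= cur
    )
    duration_min = len(chest_depth_m) / sample_rate_hz / 60.0
    return cycles / duration_min


# Synthetic example: 60 s sampled at 10 Hz, breathing at 0.5 Hz
# (about 30 breaths per minute) with 1 cm of chest movement.
signal = [1.0 + 0.01 * math.sin(math.pi * t / 10 + 0.3) for t in range(600)]
print(round(breaths_per_minute(signal, sample_rate_hz=10)))
```

What counts as an abnormal rate is a clinical question and is intentionally left out of this sketch.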

Healthcare and Rehabilitation

Physical therapy is important for the recovery training of stroke survivors and sports injury patients. Since supervision by a professional provided by a hospital or medical agency is expensive, home training with a vision-based rehabilitation application is preferred because it allows people to practice movement training privately and economically. In computer-aided therapy or rehabilitation, human action evaluation can be applied to assist patients in training at home, guide them to perform actions properly, and prevent them from further injuries.

Medical Skill Training

Computer Vision applications are used for assessing the skill level of expert learners on self-learning platforms. For example, simulation-based surgical training platforms have been developed for surgical education. The technique of action quality assessment makes it possible to develop computational approaches that automatically evaluate the surgical students’ performance. Accordingly, meaningful feedback can be provided to individuals to guide them in improving their skill levels.

Transportation

Vehicle Classification

Computer Vision applications for automated vehicle classification have a long history, and the underlying technologies have been evolving for decades. With the rapid growth of affordable sensors such as closed-circuit television (CCTV) cameras, light detection and ranging (LiDAR), and even thermal imaging devices, vehicles can be detected, tracked and categorized in multiple lanes simultaneously. The accuracy of vehicle classification can be improved by combining multiple sensors such as thermal imaging, LiDAR imaging, and RGB visible cameras. There are multiple specializations; for example, a deep-learning-based computer vision solution for construction vehicle detection has been employed for purposes such as safety monitoring, productivity assessment, and managerial decision-making.

Moving Violations Detection

Law enforcement agencies and municipalities are increasing the deployment of camera‐based roadway monitoring systems with the goal of reducing unsafe driving behavior. There is increasing use of computer vision techniques for automating the detection of violations such as speeding, running red lights or stop signs, wrong‐way driving, and making illegal turns.
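
For the speeding case, one simple way to reason about it is to time a tracked vehicle between two points a known distance apart. The sketch below assumes the road positions are already calibrated to metres along the lane and that the camera frame rate is known; the function names and the example numbers are hypothetical, and real enforcement systems involve calibration and legal tolerances not shown here.

```python
def speed_kmh(start_m, end_m, frames_between, fps):
    """Average speed in km/h of a vehicle tracked from `start_m` to `end_m`
    (positions along the road in metres) over `frames_between` video frames."""
    seconds = frames_between / fps
    return abs(end_m - start_m) / seconds * 3.6  # m/s to km/h


def is_speeding(start_m, end_m, frames_between, fps, limit_kmh):
    """True if the measured average speed exceeds the posted limit."""
    return speed_kmh(start_m, end_m, frames_between, fps) > limit_kmh


# Hypothetical track: 25 m covered in 30 frames of 30 fps video.
print(is_speeding(0.0, 25.0, frames_between=30, fps=30, limit_kmh=80))
```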

Traffic Flow Analysis

Analysis of traffic flow has been studied extensively for intelligent transportation systems (ITS) using both invasive methods (tags, under-pavement coils, etc.) and non-invasive methods such as cameras. With the rise of computer vision and AI, video analytics can now be applied to the ubiquitous traffic cameras, which can have a major impact on ITS and smart cities. Computer vision can be used to observe traffic flow and to measure some of the variables required by traffic engineers.

Parking Occupancy Detection

Visual parking space monitoring is used with the goal of parking lot occupancy detection. Computer vision applications power decentralized and efficient solutions for visual parking lot occupancy detection based on a deep Convolutional Neural Network (CNN). There exist multiple datasets for parking lot detection such as PKLot and CNRPark-EXT. Furthermore, video-based parking management systems have been implemented using stereoscopic imaging (3D) or thermal cameras.

Automated License Plate Recognition

Many modern transportation and public safety systems depend on the ability to recognize and extract license plate information from still images or videos. Automated license plate recognition (ALPR) has in many ways transformed the public safety and transportation industries, helping enable modern tolled roadway solutions, providing tremendous operational cost savings via automation, and even enabling completely new capabilities in the marketplace (e.g., police cruiser-mounted license plate reading units). OpenALPR is a popular automatic number-plate recognition library, based on character recognition on images or video feeds of vehicle registration plates.

Vehicle Re-Identification

With improvements in person re-identification, smart transportation and surveillance systems aim to replicate this approach for vehicles using vision-based vehicle re-identification. Conventional methods to provide a unique vehicle ID are usually intrusive (in-vehicle tag, cellular phone or GPS). For controlled settings such as at a toll booth, automatic license plate recognition (ALPR) is probably the most suitable technology for accurate identification of individual vehicles. However, license plates are subject to change and forgery, and ALPR cannot capture distinguishing characteristics of a vehicle such as marks or dents. Non-intrusive methods such as image-based recognition have high potential and demand but are still far from mature for practical usage. Most existing vision-based vehicle re-identification techniques are based on vehicle appearance such as color, texture and shape. As of today, the recognition of subtle distinctive features such as vehicle make or model year is still an unresolved challenge.
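
Appearance-based matching is often framed as comparing feature vectors. The sketch below assumes that each vehicle sighting has already been reduced to a numeric appearance vector (for instance, summarizing color, texture and shape) and picks the most similar known vehicle by cosine similarity. The vectors, ids, and function names are made up for illustration; how such features are extracted is outside the scope of this example.

```python
from math import sqrt


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def best_match(query, gallery):
    """Return the id of the gallery vehicle most similar to `query`.

    `gallery` maps a vehicle id to its stored appearance vector.
    """
    return max(gallery, key=lambda vid: cosine_similarity(query, gallery[vid]))


# Hypothetical appearance vectors for two previously seen vehicles.
gallery = {"car_a": [1.0, 0.0, 0.0], "car_b": [0.6, 0.8, 0.0]}
print(best_match([0.5, 0.9, 0.1], gallery))
```

A real system would also apply a minimum similarity so that unseen vehicles are not forced onto an existing id.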

Pedestrian Detection

The detection of pedestrians is crucial to intelligent transportation systems, with applications ranging from autonomous driving to infrastructure surveillance, traffic management, transit safety and efficiency, and law enforcement. Pedestrian detection involves many types of sensors, such as traditional CCTV or IP cameras, thermal imaging devices, near-infrared imaging devices, and onboard RGB cameras. Pedestrian detection algorithms can be based on infrared signatures, shape features, gradient features, machine learning, or motion features. Pedestrian detection relying on deep convolutional neural networks has made significant progress, even with the detection of heavily occluded pedestrians.

Traffic Sign Detection

Computer Vision applications are used for traffic sign detection and recognition. Vision techniques are applied to segment traffic signs from different traffic scenes (using image segmentation), and deep learning algorithms are employed for the recognition and classification of traffic signs.

Collision Avoidance Systems

Vehicle detection and lane detection form an integral part of most advanced driver assistance systems (ADAS). Deep neural networks have recently been investigated for use in autonomous collision avoidance systems.

Road Condition Monitoring

Applications for computer-vision-based defect detection are developed to monitor roads and other civil infrastructure. Pavement condition assessment provides information to make more cost-effective and consistent decisions regarding the management of roads. Generally, pavement distress inspections are performed using sophisticated data collection vehicles, but this is starting to change. A Deep Learning approach to develop an asphalt pavement condition index is able to provide a human-independent, inexpensive, efficient, and safe way to maintain roads. A related use case detects road potholes to manage road maintenance and reduce the number of related vehicle accidents.

Infrastructure Condition Assessment

To ensure the safety and the serviceability of civil infrastructure it is essential to visually inspect and assess its physical and functional condition. Systems for Computer Vision-based civil infrastructure inspection and monitoring are used to automatically convert image and video data into actionable information. Computer Vision inspection applications are used to identify structural components, characterize local and global visible damage, and detect changes from a reference image. Such monitoring applications include static measurement of strain and displacement and dynamic measurement of displacement for modal analysis.

Driver Attentiveness Detection

Distracted driving, such as daydreaming, cell phone usage and looking at something outside the car, accounts for a large proportion of road traffic fatalities worldwide. Artificial intelligence is used to understand driving behaviors and find solutions to mitigate road traffic incidents. Road-surveillance technologies are used to observe passenger compartment violations, for example in deep-learning-based seat belt detection in road surveillance. In-vehicle driver monitoring technologies focus on visual sensing, analysis, and feedback. Driver behavior can be inferred both directly from inward driver-facing cameras and indirectly from outward scene-facing cameras or sensors. Techniques based on driver-facing video analytics detect the face and eyes with algorithms for gaze direction, head pose estimation, and facial expression monitoring. Face detection algorithms have been able to detect attentive vs. inattentive faces. Deep Learning algorithms are able to detect differences between eyes that are focused and unfocused, as well as signs of driving under the influence. There are multiple vision-based applications for real-time distracted driver posture classification with multiple deep learning methods (RNN and CNN) used in driver distraction detection.

Sports

Player Pose Tracking

AI vision can be used to recognize patterns between human body movement and pose over multiple frames in video footage or real-time video streams. Human pose estimation has been applied to real-world videos of swimmers where single stationary cameras film above and below the water surface. Those video recordings can be used to quantitatively assess the athletes’ performance without manually annotating the body parts in each video frame. Convolutional Neural Networks are used to automatically infer the required pose information and detect the swimming style of an athlete.

Markerless Motion Capture

Cameras can be used to track the motion of the human skeleton without using traditional optical markers and specialized cameras. This is essential in sports capture, where players cannot be burdened with additional performance capture attire or devices.

Objective Athlete Performance Assessment

Automated detection and recognition of sport-specific movements overcome the limitations associated with manual performance analysis methods. Computer Vision data inputs can be used in combination with the data of body-worn sensors and wearables. Popular use cases are swimming analysis, golf swing analysis, over-ground running analytics, alpine skiing, and the detection and evaluation of cricket bowling.

Multi-Player Pose Tracking

Using Computer Vision algorithms, the pose and movement of multiple team players can be calculated from both monocular (single-camera footage) and multi-view (footage of multiple cameras) sports video datasets. The potential use of estimating the 2D or 3D Pose of players in sports is wide-reaching and includes performance analysis, motion capture, and novel applications in broadcast and immersive media.

Stroke Recognition

Computer vision applications can be used to detect and classify strokes (for example to classify strokes in table tennis). Recognition or classification of movements involves further interpretations and labeled predictions of the identified instance (for example differentiating tennis strokes as forehand or backhand). Stroke recognition aims to provide tools for teachers, coaches, and players to analyze table tennis games and to improve sports skills more efficiently.

Near Real-Time Coaching

Computer Vision based sports analytics help to improve resource efficiency and reduce feedback times for time-constrained tasks. Coaches and athletes involved in time-intensive notational tasks, including post-swim race analysis, can benefit from rapid objective feedback before the next race in the event program.

Sports Team Behaviors Analysis

Analysts in professional team sport regularly perform analysis to gain strategic and tactical insights into player and team behavior (identify weaknesses, assess performance and improvement potentials). However, manual video analysis is typically a time-consuming process, where the analysts need to memorize and annotate scenes. Computer Vision techniques can be used to extract trajectory data from video material and apply movement analysis techniques to derive relevant team sport analytic measures for region, team formation, event, and player analysis (for example in soccer team sports analysis).

Automated Media Coverage

AI vision technology can use video footage to interpret sports games and transmit them to media houses without crews necessarily having to go there with physical cameras. For instance, baseball has gained this advantage in the last few years with game news coverage automation.

Ball Tracking

Ball trajectory data are among the most fundamental and useful information for evaluating players’ performance and analyzing game strategies. Ball tracking applies machine learning and deep learning to detect and then track the ball in video frames. Ball tracking is important in sports with large fields (e.g., football) to help newscasters and analysts interpret and analyze a game and its tactics faster.
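
The "detect, then track" idea can be illustrated with a very small tracker. The sketch below assumes that a detector already returns candidate ball positions (in pixels) for each frame, and it links them into one trajectory by always choosing the candidate nearest to the last known position. The `max_jump_px` limit and the rule of trusting the first candidate in the first frame are simplifying assumptions, not a description of any particular system.

```python
from math import dist


def track_ball(detections_per_frame, max_jump_px=80.0):
    """Link per-frame ball candidates into one trajectory.

    Returns one entry per frame: the chosen (x, y) position, or None when
    no plausible candidate exists in that frame.
    """
    trajectory = []
    last = None
    for candidates in detections_per_frame:
        if not candidates:
            trajectory.append(None)  # ball not detected in this frame
            continue
        if last is None:
            best = candidates[0]  # assumption: first candidate is the ball
        else:
            best = min(candidates, key=lambda c: dist(c, last))
            if dist(best, last) > max_jump_px:
                trajectory.append(None)  # too far away to be the same ball
                continue
        trajectory.append(best)
        last = best
    return trajectory


# Hypothetical detections: frame 2 has a false positive, frame 3 has none.
frames = [[(10, 10)], [(14, 12), (200, 200)], [], [(20, 15), (300, 5)]]
print(track_ball(frames))
```

Production trackers typically add motion prediction so that the ball can be recovered after occlusion.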

Goal-Line Technology

Camera-based systems can be used to determine if a goal has been scored or not to support the decision-making of referees. Unlike sensor-based approaches, the vision-based method is noninvasive and does not require changes to the typical football equipment. Such Goal-Line Technology systems are based on high-speed cameras whose images are used to triangulate the position of the ball. A ball detection algorithm analyzes candidate ball regions in order to recognize the ball pattern.

Event Detection in Sports

Deep Learning can be used to detect complex events from unstructured videos, like scoring a goal in a football game, near misses, or other exciting parts of a game that do not result in a score. This technology can be used for real-time event detection in sports broadcasts, applicable to a wide range of field sports.

Self-training Feedback

Computer Vision based self-training systems for sports exercise are a recently emerging research topic. While self-training is essential in sports exercise, a practitioner may progress to a limited extent without the instruction of a coach. For example, a yoga self-training application aims to instruct the practitioner to perform yoga poses correctly, assisting in rectifying poor postures, and preventing injury. A self-training system gives instructions on how to adjust the body posture.

Automatic Highlight Generation

Producing sports highlights is labor-intensive work that requires some degree of specialization, especially in sports with a complex set of rules that are played over a longer time (e.g., cricket). An application example is automatic cricket highlight generation using event-driven and excitement-based features to recognize and clip important events in a cricket match. Another application is the automatic curation of golf highlights using multimodal excitement features with Computer Vision.

Sports Activity Scoring

Deep Learning methods can be used for sports activity scoring, which assesses the action quality of athletes (Deep Features for Sports Activity Scoring). Automatic sports activity scoring can be used in diving, figure skating, or vaulting (ScoringNet is a 3D CNN application for sports activity scoring). For example, a diving scoring application assesses the quality of an athlete’s dive: it matters whether the athlete’s feet are together and their toes are pointed straight throughout the whole dive.

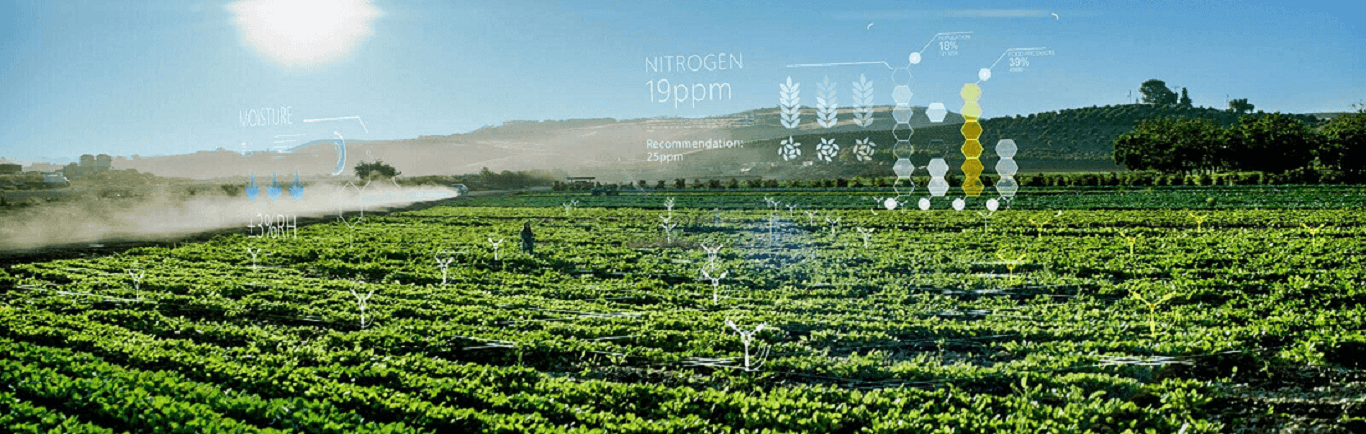

Agriculture

Crop Monitoring

The yield and quality of important crops such as rice and wheat determine the stability of food security. Traditionally, the monitoring of crop growth mainly relies on subjective human judgment and is not timely or accurate. Computer Vision applications make it possible to monitor plant growth and the response to nutrient requirements continuously and non-destructively. Compared with manual operations, the real-time monitoring of crop growth by applying computer vision technology can detect the subtle changes in crops due to malnutrition much earlier and can provide a reliable and accurate basis for timely regulation. Computer Vision applications can be used for the measurement of plant growth indicators or to determine the growth stage.

Flowering Detection

The heading date of wheat is one of the most important parameters for wheat crops. An automatic computer vision observation system can be used to determine the wheat heading period. Computer vision technology has the advantages of low cost, small error, high efficiency and good robustness, and it supports dynamic and continuous analysis.

Plantation Monitoring

In intelligent agriculture, image processing with drone images can be used to monitor palm oil plantations remotely. With geospatial orthophotos, it is possible to identify which part of the plantation land is fertile for planted crops. It is also possible to identify areas that are less fertile in terms of growth, as well as parts of the plantation that are not growing at all.

Insect Detection

Rapid and accurate recognition and counting of flying insects are of great importance, especially for pest control. Traditional manual identification and counting of flying insects is inefficient and labor-intensive. Vision-based systems allow the counting and recognition of flying insects (based on You Only Look Once (YOLO) object detection and classification).

Plant Disease Detection

Automatic and accurate estimation of disease severity is essential for food security, disease management, and yield loss prediction. The deep learning method avoids labor-intensive feature engineering and threshold-based image segmentation. Applications for automatic image-based plant disease severity estimation using deep convolutional neural networks (CNNs) have been developed, for example to identify apple black rot.

Automatic Weeding

Weeds are considered to be harmful plants in agronomy because they compete with crops to obtain the water, minerals and other nutrients in the soil. Spraying pesticides only in the exact locations of weeds greatly reduces the risk of contaminating crops, humans, animals and water resources. The intelligent detection and removal of weeds are critical to the development of agriculture. A neural network-based computer vision system can be used to identify potato plants and three different weeds for site-specific spraying.

Automatic Harvesting

Traditional agriculture relies on mechanical operations, with manual harvesting as the mainstay, which results in high costs and low efficiency. In recent years, with the continuous application of computer vision technology, intelligent agricultural harvesting machines, such as harvesting machinery and picking robots, have emerged in agricultural production, marking a new step in the automatic harvesting of crops. The main focus of harvesting operations is to ensure product quality during harvesting to maximize the market value. Computer Vision powered applications include picking cucumbers automatically in a greenhouse environment or the automatic identification of cherries in a natural environment.

Agricultural Product Quality Testing

The quality of agricultural products is one of the important factors affecting market prices and customer satisfaction. Compared to manual inspections, Computer Vision provides a way to perform external quality checks and achieve high degrees of flexibility and repeatability at a relatively low cost and with high precision. Systems based on machine vision and computer vision are used for rapid testing of sweet lemon damage or non-destructive quality evaluation of potatoes.

Irrigation Management

Soil management that uses technology to enhance soil productivity through cultivation, fertilization or irrigation has a notable impact on modern agricultural production. By obtaining useful information about the growth of horticultural crops through images, the soil water balance can be accurately estimated to achieve accurate irrigation planning. Computer vision applications provide valuable information about the water balance for irrigation management. A vision-based system can process multi-spectral images taken by unmanned aerial vehicles (UAVs) and obtain the vegetation index (VI) to provide decision support for irrigation management.
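
The text above does not say which vegetation index is meant. As an illustration, the sketch below assumes the widely used Normalized Difference Vegetation Index, NDVI = (NIR - Red) / (NIR + Red), computed per pixel from near-infrared and red reflectance values. The function names and sample values are hypothetical, and any link from the index to an irrigation decision would need agronomic calibration that is not shown here.

```python
def ndvi(nir, red):
    """NDVI for one pixel: (NIR - Red) / (NIR + Red), ranging from -1 to 1.

    Returns 0.0 when both bands are zero to avoid division by zero.
    """
    total = nir + red
    return 0.0 if total == 0 else (nir - red) / total


def mean_ndvi(nir_band, red_band):
    """Average NDVI over matching pixels of two equally sized bands."""
    values = [ndvi(n, r) for n, r in zip(nir_band, red_band)]
    return sum(values) / len(values)


# Hypothetical reflectance values for a small field plot (flattened pixels).
nir_pixels = [0.8, 0.7, 0.5]
red_pixels = [0.2, 0.3, 0.5]
print(round(mean_ndvi(nir_pixels, red_pixels), 3))
```

Higher values generally indicate denser, healthier vegetation, which is why such indices are used as one input to irrigation planning.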

UAV Farmland Monitoring

Real-time farmland information and an accurate understanding of that information play a basic role in precision agriculture. Over recent years, UAVs, as a rapidly advancing technology, have allowed agricultural information to be acquired at high resolution, at low cost, and quickly. UAV platforms equipped with image sensors provide detailed information on agricultural economics and crop conditions (for example continuous crop monitoring). UAV remote sensing has contributed to an increase in agricultural production with a decrease in agricultural costs.

Yield Assessment

Through the application of computer vision technology, soil management, maturity detection and yield estimation for farms have been realized. Moreover, the existing technology can be combined with methods such as spectral analysis and deep learning. Most of these methods have the advantages of high precision, low cost, good portability, good integration and scalability and can provide reliable support for management decision making. An example is the estimation of citrus crop yield via fruit detection and counting using computer vision. Also, the yield from sugarcane fields can be predicted by processing images obtained using UAVs.

Animal Monitoring

Animals can be monitored using novel techniques that have been trained to detect the type of animal and its actions. There is much use for animal monitoring in farming, where livestock can be monitored remotely for disease detection, changes in behavior, or giving birth. Additionally, agriculture and wildlife scientists can view wild animals safely at a distance.

Farm Automation

Technologies such as harvesting, seeding, and weeding robots, autonomous tractors, and drones that monitor farm conditions and apply fertilizers can maximize productivity despite labor shortages. Agriculture can also be more profitable when the ecological footprint of farming is minimized.

If you are interested in any of the above examples and would like more information, please contact us. We would be happy to assess your situation and answer any questions you might have. If you would like to set up a Zoom meeting, click here. If you prefer to contact us through any other means, you will find our complete contact information here.